You know that feeling. It’s Friday afternoon, and your PM drops a "quick favor" into your Slack. They need a list of every product, price, and review from a competitor’s site—by Monday. You check for an API. Nothing. You check for a public dataset. Crickets.

That’s when it hits you: you’re going to have to build a web scraper.

To the uninitiated, web scraping sounds like a dark art or a shortcut to getting blacklisted by half the internet. To a developer, it’s often just a necessary evil—a way to bridge the gap between "the data exists" and "the data is in a format I can actually use."

In this guide, we’re going to pull back the curtain. We’ll look at what a web scraper is at its core, how the modern web has made scraping significantly harder, and how you can move from "fighting with CSS selectors" to "actually using structured data."

What is a Web Scraper, Really?

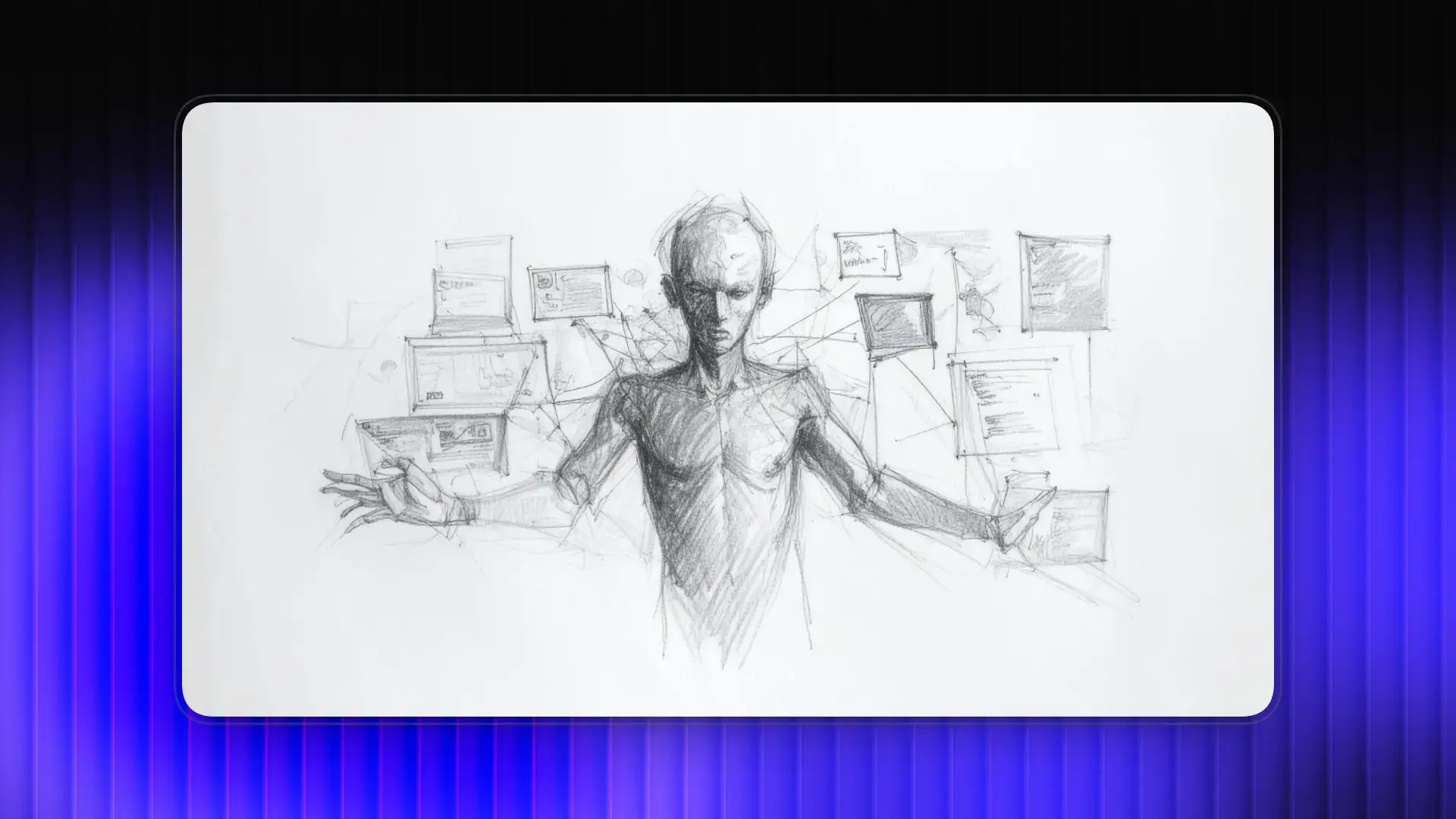

At its most fundamental level, a web scraper is a script or program that automates the process of browsing the web to extract specific information.

Think of it as an Invisible Intern. If you hired an intern, you’d tell them: "Go to this URL, find the price in the blue box, copy it into this spreadsheet, and repeat for these 500 pages." A web scraper does exactly that, but at the speed of your processor and without the need for coffee breaks or a hit from an Elf Bar.

The Lifecycle of a Scrape

Every scraper, whether it’s a 10-line Python script or a massive enterprise engine, follows the same basic loop:

Request: The scraper sends an HTTP request (usually a GET) to a server.

Fetch: The server sends back the page content (HTML, JSON, or a JS bundle).

Parse: The scraper "reads" the code, seeking specific patterns or tags.

Extract: It pulls the specific data points—like a product name or a stock price.

Store: It saves that data into a database, a CSV, or a JSON file.
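The loop above can be sketched with nothing but the Python standard library. This is a minimal illustration, not a production design: the function names (fetch, extract_titles, store), the TitleParser class, the User-Agent string, and the assumption that the data we want lives in h2 tags are all made up for the example.

```python
import csv
import io
from html.parser import HTMLParser
from urllib.request import Request, urlopen


def fetch(url: str) -> str:
    """Request + Fetch: send a GET and return the response body as text."""
    # The User-Agent value is a hypothetical placeholder.
    request = Request(url, headers={"User-Agent": "example-scraper/0.1"})
    with urlopen(request, timeout=10) as response:
        return response.read().decode("utf-8", errors="replace")


class TitleParser(HTMLParser):
    """Parse + Extract: collect the text inside every <h2> tag (an assumption)."""

    def __init__(self):
        super().__init__()
        self.in_h2 = False
        self.titles = []

    def handle_starttag(self, tag, attrs):
        if tag == "h2":
            self.in_h2 = True
            self.titles.append("")

    def handle_endtag(self, tag):
        if tag == "h2":
            self.in_h2 = False

    def handle_data(self, data):
        if self.in_h2:
            self.titles[-1] += data


def extract_titles(html: str) -> list[str]:
    parser = TitleParser()
    parser.feed(html)
    return [title.strip() for title in parser.titles]


def store(titles: list[str]) -> str:
    """Store: serialize the rows as CSV (returned as a string to stay in memory)."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(["title"])
    writer.writerows([title] for title in titles)
    return buffer.getvalue()


# Offline demo: skip fetch() and feed an inline page through the remaining steps.
sample_html = "<html><body><h2>First Book</h2><h2> Second Book </h2></body></html>"
titles = extract_titles(sample_html)
csv_text = store(titles)
```

In a real run you would pass the result of fetch(url) to extract_titles and write the CSV text to a file or database instead of keeping it in a string.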

The Evolution of the Web (and why your old scripts are failing)

If you’ve been in the game for a while, you remember the "Golden Age" of scraping. You could just use curl or a simple library to grab a static HTML file, regex out what you needed, and call it a day.

But the modern web is a different beast. We’ve moved from static pages to Single Page Applications (SPAs) built with React, Vue, and Angular.

The "Empty Shell" Problem

When you request a modern site today, the server often sends back an almost empty HTML file. It looks something like this:

    <div id="root"></div>
    <script src="/app.bundle.js"></script>

If your scraper just looks at the initial HTML, it sees... nothing. The actual content doesn't exist until the JavaScript executes and fetches data from an internal API.

This has forced developers to move from simple HTTP clients to headless browsers, driven by tools like Puppeteer or Playwright, that actually render the page the way a real browser would.
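Before reaching for a full browser, it can help to check whether a page really is an empty shell. The sketch below is one rough heuristic, assuming that "almost no visible text outside script and style tags" means the content is rendered client-side. The names visible_text and looks_like_empty_shell and the 20-character threshold are invented for illustration.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collect text nodes, ignoring the contents of <script> and <style>."""

    SKIPPED = {"script", "style"}

    def __init__(self):
        super().__init__()
        self.skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self.skip_depth > 0:
            self.skip_depth -= 1

    def handle_data(self, data):
        if self.skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def visible_text(html: str) -> str:
    parser = VisibleTextParser()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


def looks_like_empty_shell(html: str, min_chars: int = 20) -> bool:
    """Heuristic: almost no visible text suggests client-side rendering."""
    return len(visible_text(html)) < min_chars


shell = '<div id="root"></div><script src="/app.bundle.js"></script>'
rendered = '<div id="root"><h1>Widget</h1><p class="price">$10.00 in stock today</p></div>'
```

If looks_like_empty_shell returns True for the raw response, a plain HTTP client is unlikely to be enough and a rendering step is probably needed.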

Building Your First Scraper: A Practical Python Example

Let’s get our hands dirty. For static or server-side rendered sites, Python remains the king of scraping, thanks to the BeautifulSoup library.

Imagine we want to scrape a simple book directory to get titles and prices. Here is a pragmatic, working example:

    import requests
    from bs4 import BeautifulSoup

    def scrape_books(url):
        # 1. The Request: Always include a User-Agent to look like a real browser!
        headers = {
            'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/91.0.4472.124 Safari/537.36'
        }

        try:
            response = requests.get(url, headers=headers, timeout=10)
            response.raise_for_status()  # Check if the request actually worked

            # 2. The Parse: Turning raw HTML into a searchable tree
            soup = BeautifulSoup(response.text, 'html.parser')
            books = []

            # 3. The Extraction: Finding the specific tags
            # Let's say each book is in an <article class="product_pod">
            for product in soup.find_all('article', class_='product_pod'):
                title = product.h3.a['title']
                price = product.find('p', class_='price_color').text
                books.append({
                    'title': title,
                    'price': price.strip()
                })

            return books

        except Exception as e:
            print(f"An error occurred: {e}")
            return []

    # Usage
    # data = scrape_books('http://books.toscrape.com/')
    # print(data)

Why this works (and why it’s fragile):

User-Agent: Without this, many servers will see the default "python-requests/2.x" identifier and block you immediately.

CSS Selectors: We are relying on class_='product_pod'.

The Trap: What happens if the site owner changes that class name to item-container tomorrow? Your script breaks. This is the Maintenance Trap, and it’s the primary reason developers grow to hate scraping.
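One detail worth noticing: when the class name changes, find_all simply matches nothing, so scrape_books returns an empty list rather than crashing. A small validation step can turn that silent failure into a loud one. The sketch below is one possible approach. ScrapeValidationError and validate_records are hypothetical names, and the required fields mirror the 'title' and 'price' keys from the example above.

```python
class ScrapeValidationError(Exception):
    """Raised when scraped output no longer looks like what we expect."""


def validate_records(records, required_fields=("title", "price"), min_count=1):
    """Fail loudly if the scrape returned too few rows or rows with empty fields."""
    if len(records) < min_count:
        raise ScrapeValidationError(
            f"expected at least {min_count} records, got {len(records)}; "
            "the page structure may have changed"
        )
    for index, record in enumerate(records):
        # A field counts as missing if the key is absent or the value is empty.
        missing = [field for field in required_fields if not record.get(field)]
        if missing:
            raise ScrapeValidationError(f"record {index} is missing {missing}")
    return records
```

Wrapping the call as validate_records(scrape_books(url)) means a redesign on the target site shows up as an exception in your logs instead of an empty table in your database.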

Common Challenges: Why Scraping is Harder Than It Looks

If you're building this for a production environment, you're going to hit walls. Fast. Here are the "boss fights" of web scraping:

1. Rate Limiting & IP Blocking

Servers don't like being hit 1,000 times a second. They will throttle your connection or block your IP entirely. Developers usually solve this with Proxy Rotation, which is expensive and a headache to manage.
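Before paying for proxies, the cheaper first step is usually to slow down and back off when the server pushes back. Here is a minimal sketch of retrying with exponential backoff and a little random jitter. The function names, the default of 4 attempts, and the 0 to 0.5 second jitter range are illustrative choices, and fetch stands in for whatever function performs your HTTP request.

```python
import random
import time


def backoff_delays(retries, base=1.0, cap=60.0):
    """Yield exponentially growing delays: base, 2*base, 4*base, ... up to cap."""
    for attempt in range(retries):
        yield min(cap, base * (2 ** attempt))


def fetch_with_retry(fetch, url, retries=4, sleep=time.sleep):
    """Call fetch(url); on failure, wait and try again, up to `retries` attempts."""
    if retries < 1:
        raise ValueError("retries must be at least 1")
    for attempt, delay in enumerate(backoff_delays(retries), start=1):
        try:
            return fetch(url)
        except Exception as error:  # in real code, catch your HTTP client's error type
            if attempt == retries:
                raise RuntimeError(f"giving up on {url} after {retries} attempts") from error
            # Jitter keeps many workers from retrying at the same instant.
            sleep(delay + random.uniform(0, 0.5))
```

The sleep parameter is injectable so the function can be tested without actually waiting.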

2. CAPTCHAs

The "Are you a robot?" checkbox is the bane of our existence. While there are solving services, they add latency and cost to your pipeline.

3. Infinite Scroll & Pagination

Many sites don't have "Page 2" links anymore. You have to simulate a user scrolling down to trigger a JavaScript event that loads more data. This requires a full browser instance, which consumes massive amounts of RAM.

4. Data Normalization

The web is messy. One price might be $10.00, another might be 10 USD, and another might be Contact for Quote. Turning that "dirty" HTML into clean, type-safe JSON is often more work than the scraping itself.
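To make that concrete, here is a small sketch that normalizes the three price strings above. It assumes US-style formatting (comma as thousands separator, dot as decimal point) and ignores the currency entirely. parse_price is a hypothetical helper, not part of any library.

```python
import re
from decimal import Decimal
from typing import Optional

# Digits, optional comma-grouped thousands, optional decimal part.
_NUMBER = re.compile(r"\d+(?:,\d{3})*(?:\.\d+)?")


def parse_price(raw: str) -> Optional[Decimal]:
    """Return the numeric amount in a price string, or None if there is none."""
    match = _NUMBER.search(raw)
    if match is None:
        return None
    return Decimal(match.group().replace(",", ""))
```

Returning None for "Contact for Quote" forces the calling code to handle the missing value explicitly, which is usually safer than defaulting to zero. Decimal is used instead of float to avoid rounding surprises with money.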

The "Maintenance Trap": A Story of Brittle Code

I once spent an entire week building a scraper for a real estate site. I mapped out every div, every nested span, and every weirdly named ID. It was a masterpiece of XPath and Regex.

I pushed it to production on Friday. On Saturday morning, the real estate site did a major UI overhaul. They switched to a CSS-in-JS framework where class names were randomly generated strings like _1a2b3.

My "masterpiece" was now garbage.

This is the reality of traditional scraping: You are at the mercy of the target site’s front-end developers. Every time they ship a feature, they might accidentally break your business logic.

A Better Way: Moving Toward Structured Web Data

As developers, we shouldn't be spending our time debugging why a .price-value class changed to .price-amount. Our time is better spent using the data to build features.

This realization is leading many teams to move away from "hand-rolled" scrapers and toward solutions that treat the web like an API.

Introducing ManyPI: Any Website, One API

This is precisely where ManyPI comes in. Instead of you writing the request logic, the proxy rotation, the headless browser management, and the fragile selectors, ManyPI turns any website into a type-safe API within seconds.

It essentially abstracts the "messy" parts of the web. You tell it what URL you want and what data you need, and it returns structured, clean JSON.

How it looks in your code:

Instead of a 50-line script with BeautifulSoup or Playwright, you can just perform a standard API call:

    curl -X POST \
      'https://app.manypi.com/api/scrape/YOUR_API_ENDPOINT_ID' \
      -H 'Authorization: Bearer YOUR_API_KEY' \
      -H 'Content-Type: application/json'

Why this is a game-changer for devs:

Type-Safety: You define a schema (e.g., current_price: "number"), and you get exactly that. No more manual string parsing.

Zero Infrastructure: You don't have to maintain a cluster of Dockerized Headless Chromes.

Resilience: ManyPI uses sophisticated logic to find the data even if the underlying HTML structure shifts slightly.
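For readers who would rather stay in Python, the curl call shown earlier could be mirrored roughly like this with the standard library. The URL and headers are copied from that curl example, with the same placeholders. Everything else is an assumption: build_request and call_endpoint are made-up names, and because this article does not show the response format, the sketch only decodes whatever JSON comes back.

```python
import json
from urllib.request import Request, urlopen

# Placeholders copied from the curl example; replace them with real values.
ENDPOINT_URL = "https://app.manypi.com/api/scrape/YOUR_API_ENDPOINT_ID"
API_KEY = "YOUR_API_KEY"


def build_request(url: str, api_key: str) -> Request:
    """Build the same POST request as the curl command (no request body)."""
    return Request(
        url,
        method="POST",
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
    )


def call_endpoint(url: str, api_key: str):
    """Send the request and decode the JSON response body (shape not assumed)."""
    with urlopen(build_request(url, api_key), timeout=30) as response:
        return json.loads(response.read().decode("utf-8"))
```

Calling call_endpoint(ENDPOINT_URL, API_KEY) with real credentials would perform the network request. Building the request separately makes it easy to inspect before sending.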

Best Practices for Ethical (and Successful) Scraping

Whether you're rolling your own or using a service like ManyPI, there’s an "unwritten code" to being a good web citizen:

Check the robots.txt: Most sites have a /robots.txt file that tells you which paths are off-limits. Respect it.

Don't DDoS the Target: Use reasonable delays between requests. If you're using a service, it usually handles this "politeness" for you.

Identify Yourself: Use a User-Agent or a From header that provides a way for the site owner to contact you if your script is causing issues.

Use the Data Responsibly: Be aware of copyright and terms of service. Scraping public data is generally legal in many jurisdictions (like the US, following the hiQ v. LinkedIn case), but using it to rebuild a competitor’s exact product is a legal gray area.
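The first two rules are easy to automate with Python's built-in urllib.robotparser. The sketch below parses an inline robots.txt so it runs offline. The sample rules, the example.com URLs, the User-Agent name, and the helper names allowed and polite_delay are all invented for illustration.

```python
from urllib.robotparser import RobotFileParser

USER_AGENT = "example-scraper/0.1"  # hypothetical name for illustration

# Inline rules keep the sketch offline; a real script would instead call
# parser.set_url("https://example.com/robots.txt") followed by parser.read().
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /private/
Crawl-delay: 2
"""

parser = RobotFileParser()
parser.parse(SAMPLE_ROBOTS.splitlines())


def allowed(url: str) -> bool:
    """True if robots.txt permits our user agent to fetch this URL."""
    return parser.can_fetch(USER_AGENT, url)


def polite_delay(default: float = 1.0) -> float:
    """Seconds to wait between requests: the site's Crawl-delay, else a default."""
    delay = parser.crawl_delay(USER_AGENT)
    return float(delay) if delay is not None else default
```

A scraping loop would then skip any URL where allowed(url) is False and sleep for polite_delay() seconds between requests.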

The Takeaway

Web scraping is a foundational skill for the modern developer. It’s the ultimate "power user" move—turning the unstructured chaos of the internet into a structured database you can query.

But as we’ve seen, the gap between a "working script" and a "reliable production pipeline" is huge.

My recommendation?

If you're learning, build a script from scratch using Python or Node.js. It will teach you more about the DOM and HTTP than any textbook.

If you're building a product that depends on that data, don't build the scraper. Use a tool like ManyPI to turn that website into a type-safe API. Save your "engineering brain" for the problems that actually move the needle for your project.

Written by

Ole Mai

Founder / ManyPI