Parsing a website sounds like a fundamental CS101 task. In reality, it’s one of the most volatile, maintenance-heavy challenges in modern software engineering. The web wasn't designed to be a database; it was designed to be a visual experience.

In this guide, we’re going to look at the anatomy of web parsing, why the "old way" is failing, and how to build a data pipeline that doesn't crumble every time a designer changes a button color.

What Does "Parsing a Website" Actually Mean?

At its simplest level, parsing is the process of taking a string of data (usually HTML) and converting it into a structured format (like JSON or an Object) that your application can actually use.
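To make that definition concrete, here is a minimal sketch that uses only Python's standard library. The `LinkCollector` class, the `parse_links` helper, and the sample HTML are made up for illustration; the point is simply "string in, list of dicts out."

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collects the href and text of every <a> tag into a list of dicts."""

    def __init__(self):
        super().__init__()
        self.links = []        # the structured output
        self._current = None   # the <a> tag currently being read, if any

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._current = {"href": dict(attrs).get("href"), "text": ""}

    def handle_data(self, data):
        if self._current is not None:
            self._current["text"] += data

    def handle_endtag(self, tag):
        if tag == "a" and self._current is not None:
            self._current["text"] = self._current["text"].strip()
            self.links.append(self._current)
            self._current = None


def parse_links(html):
    """Turn a raw HTML string into a list of {"href", "text"} dicts."""
    collector = LinkCollector()
    collector.feed(html)
    collector.close()
    return collector.links


# Hypothetical input: a fragment of a blog index page
sample = '<ul><li><a href="/a">First post</a></li><li><a href="/b"> Second post </a></li></ul>'
links = parse_links(sample)
```

Real pages are far messier than this fragment, which is why most people reach for a dedicated library instead of writing handlers by hand.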

But here is where it gets tricky. There are actually three distinct steps in a modern parsing workflow:

Retrieval: Fetching the raw code from a server.

Rendering: Executing any JavaScript to reveal the data that isn't in the initial source code.

Extraction: Identifying the specific "bits" of data (the signal) amidst the "noise" (ads, navigation, tracking scripts).

The Manual Approach: Using BeautifulSoup and Python

For many developers, the journey begins with Python. It’s the "Swiss Army Knife" of data, and libraries like BeautifulSoup are the gold standard for parsing static HTML.

Let’s look at a basic example. Suppose we want to extract article titles from a news blog.

```python
import requests
from bs4 import BeautifulSoup


def parse_blog(url):
    # Step 1: Retrieval (the timeout keeps a stalled server from hanging the script)
    response = requests.get(url, timeout=10)
    if response.status_code != 200:
        print("Failed to retrieve the page")
        return []

    # Step 2: Extraction
    soup = BeautifulSoup(response.text, 'html.parser')

    # We find all <h2> tags that contain our article titles
    articles = []
    for header in soup.find_all('h2', class_='post-title'):
        title = header.get_text(strip=True)
        anchor = header.find('a')
        # .get() returns None instead of raising KeyError when href is missing
        link = anchor.get('href') if anchor else None
        articles.append({"title": title, "link": link})

    return articles


# Usage
# data = parse_blog("https://tech-blog-example.com")
# print(data)
```

Why this code is "Dangerous"

On a static site, this code is beautiful. It’s readable. It’s fast. But in a production environment, it has several fatal flaws:

The "Blank Page" Problem: If the blog renders its content client-side (as many React apps do), requests.get will likely return a nearly empty <body> tag because the content hasn't been rendered by a browser yet.

Selector Fragility: The moment the developer changes class='post-title' to class='article-header', your script returns an empty list.

No Type Safety: Is the "link" always a valid URL? Is the "title" always a string? You have to write extensive validation logic to make this data usable in a database.
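The selector problem is worse than it looks because the failure is silent: an empty list looks like "no new articles" rather than "the parser is broken." One cheap mitigation is to fail loudly when a selector stops matching. This is only a sketch; `SelectorDriftError` and `require_matches` are hypothetical names, not part of BeautifulSoup.

```python
class SelectorDriftError(RuntimeError):
    """Raised when a selector that used to match now matches too little."""


def require_matches(records, selector, minimum=1):
    """Return records unchanged, or raise if fewer than `minimum` were found."""
    if len(records) < minimum:
        raise SelectorDriftError(
            f"selector {selector!r} matched {len(records)} element(s); "
            f"expected at least {minimum}"
        )
    return records
```

Wrapping the earlier function as `require_matches(parse_blog(url), "h2.post-title")` turns a quiet empty result into an exception you can alert on. It doesn't prevent breakage, but it does tell you about it the same day.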

The "Modern" Wall: JavaScript and Anti-Bot Measures

If you’ve tried to parse a site like Amazon, LinkedIn, or any modern SaaS, you know that BeautifulSoup isn't enough. You run into the two Great Walls of Web Parsing:

1. The Single Page App (SPA) Wall

Modern websites are essentially applications running inside the browser. To parse them, you have to run a full browser instance (Headless Chrome) using tools like Puppeteer or Playwright. This allows you to wait for the JavaScript to execute and the data to actually appear on the screen.
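For reference, a minimal sketch of that rendering step using Playwright's synchronous Python API might look like the following. It assumes Playwright and its Chromium build are installed (`pip install playwright`, then `playwright install chromium`); the function name, the selector argument, and the 10-second default timeout are illustrative choices.

```python
def fetch_rendered_html(url, wait_for_selector, timeout_ms=10_000):
    """Load `url` in headless Chromium and return the HTML after rendering."""
    # Imported lazily so this module still imports where Playwright is absent.
    from playwright.sync_api import sync_playwright

    with sync_playwright() as p:
        browser = p.chromium.launch(headless=True)
        try:
            page = browser.new_page()
            page.goto(url, timeout=timeout_ms)
            # Block until the JavaScript-rendered element is in the DOM.
            page.wait_for_selector(wait_for_selector, timeout=timeout_ms)
            return page.content()
        finally:
            browser.close()
```

The returned string can be handed to BeautifulSoup exactly like `response.text`. The price is that every page load now starts a real browser, which is much slower and heavier than a plain HTTP request.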

2. The Anti-Scraping Wall

Websites don't necessarily want to be parsed. They use services like Cloudflare or Akamai to detect bot-like behavior. If you make ten requests in a row from your laptop, you’ll likely see a "403 Forbidden" or a CAPTCHA.

To bypass this, you need a rotation of residential proxies, header spoofing (to make you look like a real user on a MacBook), and "stealth" plugins to hide the fact that you're using a headless browser.

The Hidden Cost of Maintenance (The "Developer Tax")

Let’s be honest: building a parser isn't the hard part. Maintaining a parser is the hard part.

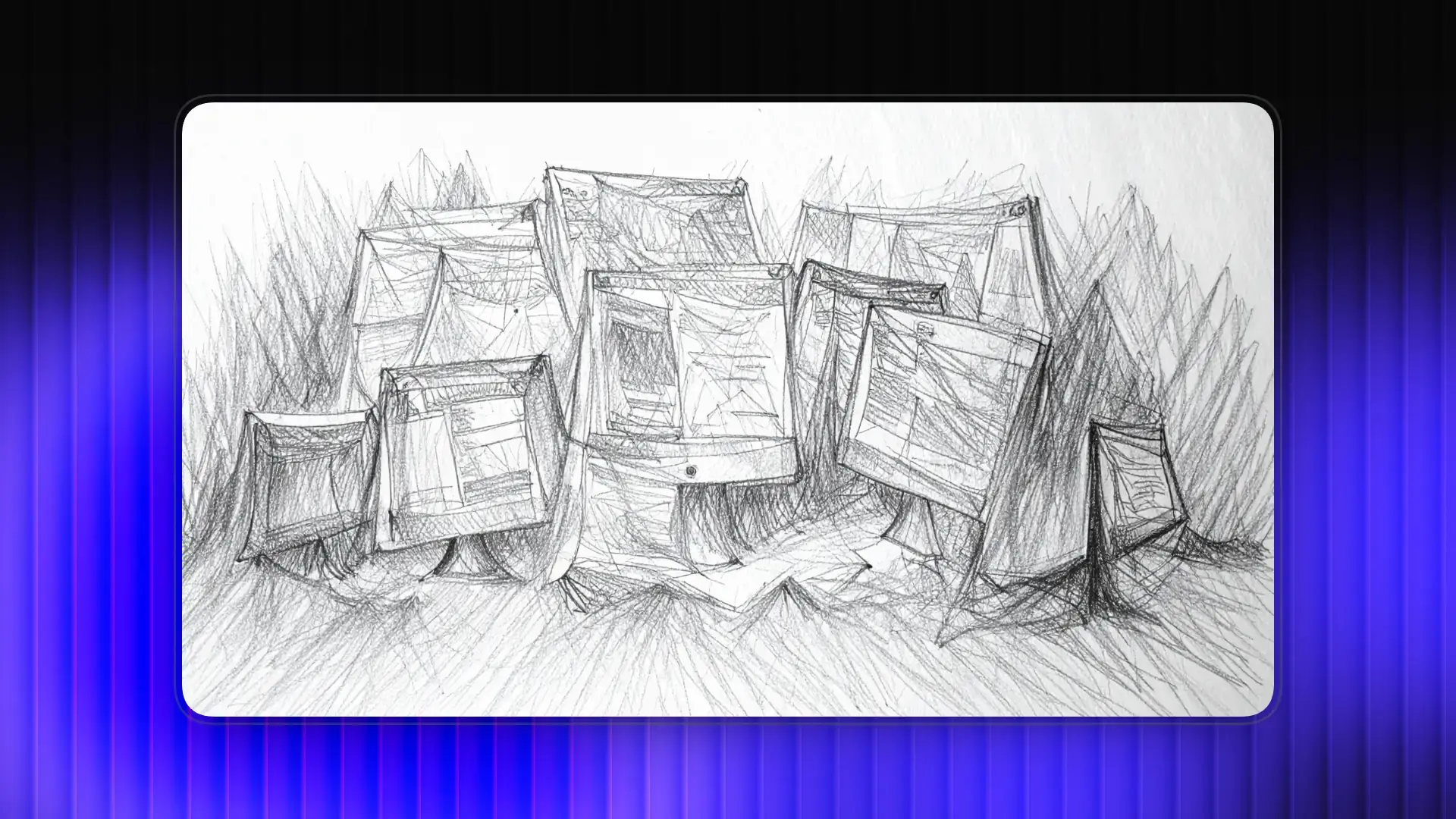

I once worked on a project that required parsing data from 50 different real estate sites. We spent roughly 20 hours a week just fixing broken CSS selectors. Every time a site did a "minor UI refresh," our data pipeline would clog.

We were essentially playing a never-ending game of Whac-A-Mole.

The Reality Check: Most developers overestimate the cost of a tool and underestimate the cost of their own time. If you spend 5 hours a month fixing a custom parser, that’s hundreds of dollars in "hidden" salary costs.

A Better Way: Turning Any Website into a Type-Safe API

What if we stopped manually hunting for CSS selectors? What if we could treat the entire web as a giant, structured database?

This is the philosophy behind ManyPI. Instead of you managing Playwright instances, proxy pools, and fragile BeautifulSoup logic, ManyPI allows you to define the data you want and get it back as a clean, type-safe API response.

It essentially turns "Parsing" from a coding task into a data request.

Practical Example: Turning a Website into an API in Seconds

Imagine you need to get product details from a complex e-commerce site. Instead of writing 100 lines of Playwright code, you send a single POST request to ManyPI.

```bash
curl -X POST \
  'https://app.manypi.com/api/scrape/YOUR_API_ENDPOINT_ID' \
  -H 'Authorization: Bearer YOUR_API_KEY' \
  -H 'Content-Type: application/json'
```

Why this is a game-changer for engineering teams:

Instant Schema Mapping: ManyPI uses advanced extraction models to find the "price" and "name" regardless of whether the site uses <span>, <div>, or <h1>.

Automatic Type Conversion: When you ask for price as a "number", ManyPI handles the heavy lifting of stripping out currency symbols ($) and commas (,) so your database doesn't reject the input.

Zero Infrastructure: No more managing headless browsers or proxy credits. It's all handled under the hood.
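To appreciate what the type conversion saves you, here is roughly what the hand-written equivalent looks like. This is a sketch of the general idea, not ManyPI's implementation; `parse_price` is a hypothetical helper, and it assumes US-style formatting (comma for thousands, dot for decimals).

```python
import re
from decimal import Decimal, InvalidOperation


def parse_price(raw):
    """Convert a display string such as "$1,299.99" into a Decimal.

    Assumes US-style formatting: comma as thousands separator, dot as decimal point.
    """
    # Drop everything except digits, the decimal point, and a minus sign.
    cleaned = re.sub(r"[^\d.\-]", "", raw)
    try:
        return Decimal(cleaned)
    except InvalidOperation:
        raise ValueError(f"not a price: {raw!r}") from None
```

Even this small function bakes in a locale assumption: a European "1.299,99" would be rejected as invalid. That is exactly the kind of edge case that keeps accumulating in manual parsers.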

Best Practices for Scaling Your Web Parsing

If you’re building a parsing pipeline—whether you’re doing it manually or using a tool like ManyPI—here are the "battle-tested" rules of thumb:

1. Decouple Extraction from Processing

Never parse a website directly into your main database. Always use a "landing" zone (like a staging table or a JSON file). This allows you to inspect the data and ensure it's not garbage before it hits your production systems.
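A minimal sketch of that separation, using a JSON Lines file as the landing zone. The function names and the `is_valid` callback are assumptions for illustration; a staging table in your database would play the same role.

```python
import json
from pathlib import Path


def write_to_staging(records, staging_path):
    """Extraction side: dump raw records to a JSON Lines file, one per line."""
    with Path(staging_path).open("w", encoding="utf-8") as fh:
        for record in records:
            fh.write(json.dumps(record) + "\n")


def load_from_staging(staging_path, is_valid):
    """Processing side: read the staged records and split good from bad."""
    accepted, rejected = [], []
    with Path(staging_path).open(encoding="utf-8") as fh:
        for line in fh:
            record = json.loads(line)
            (accepted if is_valid(record) else rejected).append(record)
    return accepted, rejected
```

Only the `accepted` list moves on to production. Keeping `rejected` around is useful too: a sudden spike in rejects is often the first sign that a site changed its layout.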

2. Implement Semantic Validation

Just because a parser returns data doesn't mean it’s correct data.

Manual Tip: Use Pydantic in Python to validate that a price is positive.

Managed Tip: Tools like ManyPI return structured JSON, making it easy to run automated checks against your expected types.
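Here is what such a check can look like. To keep the example dependency-free, this sketch swaps in a standard-library dataclass for Pydantic; the `Product` model and its three fields are hypothetical, and a Pydantic model would express the same rules more declaratively.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass(frozen=True)
class Product:
    name: str
    price: float
    url: str

    def __post_init__(self):
        # Runs right after construction, so an invalid Product never exists.
        if not isinstance(self.name, str) or not self.name.strip():
            raise ValueError("name must be a non-empty string")
        if isinstance(self.price, bool) or not isinstance(self.price, (int, float)):
            raise ValueError("price must be a number")
        if self.price <= 0:
            raise ValueError("price must be positive")
        parsed = urlparse(self.url)
        if parsed.scheme not in ("http", "https") or not parsed.netloc:
            raise ValueError("url must be an absolute http(s) URL")
```

Whichever library you use, the goal is the same: a record either passes every rule or never reaches your database.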

3. Graceful Failures and Retries

The web is unreliable. Sometimes a page takes 10 seconds to load instead of 2, and sometimes a request fails outright. Your parser should retry with exponential backoff: if the first try fails, wait 1 second, then 2, then 4.
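The 1, 2, 4 schedule can be sketched in a few lines. `retry_with_backoff` is a hypothetical helper, and the `sleep` parameter exists only so the delays can be swapped out in tests.

```python
import time


def retry_with_backoff(operation, max_attempts=4, base_delay=1.0, sleep=time.sleep):
    """Call `operation` until it succeeds, doubling the wait after each failure."""
    for attempt in range(max_attempts):
        try:
            return operation()
        except Exception:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error
            sleep(base_delay * 2 ** attempt)  # 1s, 2s, 4s, ...
```

In production you would probably catch only network-related exceptions and add a little random jitter to each delay, so that many workers don't all retry at the same instant.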

4. Respect the Source

Even if you're using a high-powered tool, don't be a nuisance. If you're parsing a small mom-and-pop shop’s inventory, don't hit their server 1,000 times a second. Use caching to store results for a few hours.
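A small in-memory cache with a time-to-live is often enough to cut the request volume dramatically. This is a sketch under simple assumptions (single process, results fit in memory); `TTLCache` and its `clock` parameter are illustrative names.

```python
import time


class TTLCache:
    """Keeps fetched pages in memory and reuses them until they expire."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries = {}  # url -> (stored_at, value)

    def get_or_fetch(self, url, fetch):
        now = self._clock()
        entry = self._entries.get(url)
        if entry is not None and now - entry[0] < self._ttl:
            return entry[1]  # still fresh: no request is sent
        value = fetch(url)
        self._entries[url] = (now, value)
        return value
```

With `TTLCache(ttl_seconds=3 * 60 * 60)`, a page requested a hundred times in an afternoon reaches the source server once or twice instead of a hundred times.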

Manual vs. Managed: Which One Should You Choose?

| Feature | Manual (BeautifulSoup/Puppeteer) | Managed (ManyPI) |
| --- | --- | --- |
| Setup Time | Hours to Days | Seconds |
| Maintenance | High (Breaks with UI changes) | Low (AI-driven resilience) |
| Anti-Bot | You have to build/buy proxies | Included |
| Data Quality | Raw strings (requires cleaning) | Structured, Type-safe JSON |
| Best For | Learning/Small, static tasks | Production-grade data pipelines |

Conclusion: Stop Being a "DOM Archeologist"

As developers, our time is best spent solving unique problems—not debugging why a button on a third-party site is now a nested-div-3 instead of a button.

The traditional "manual" way of parsing a website is a rite of passage, and it's important to understand how the DOM works. But for anyone building a scalable product, that manual approach eventually becomes a bottleneck.

By moving toward structured data extraction and tools like ManyPI, you treat the web like the API it should have been. You get to focus on building features, and let the machines handle the chaotic, ever-shifting landscape of HTML.

Written by

Ole Mai

Founder / ManyPI